In part VI of this series on ODEs and SDEs in machine learning, we saw that SDEs are straightforward to solve when both the drift and diffusion terms are functions of the independent variable $t$ but not the dependent variable $x$. This decouples the two terms and allows us to solve the SDE by directly integrating. This led to a discussion of what it means to integrate with respect to noise and to a description of the Itô integral.

In part VII of the series (this article) we consider the more complex case where the drift and diffusion terms are functions of both the independent variable $t$ and the dependent variable $x$. Here, one possible approach is to use a similar strategy to that used for linear inhomogeneous ODEs; we make a change in variable to remove this undesirable dependence, solve the equation in the new variable, and then convert back to the original variable.

Changing the variable for SDEs is not as straightforward as it is for ODEs, but Itô’s famous lemma describes how to make such changes and we derive this rule. We then apply Itô’s lemma to find closed-form solutions for the geometric Brownian motion and Ornstein-Uhlenbeck process SDEs.

Solving SDEs by a change in variables

Consider the following two homogeneous SDEs with constant coefficients:

\begin{eqnarray}\label{eq:ode6_linearhomog_two}

dx &=& \mu x\cdot dt + \sigma x \cdot dw,\nonumber \\

dx &=& -\gamma x\cdot dt + \sigma \cdot dw,

\tag{1}

\end{eqnarray}

which are sometimes referred to as having multiplicative and additive noise, respectively. The first of these equations is referred to as geometric Brownian motion and is used to model the evolution of financial assets. The second of these equations is the Ornstein-Uhlenbeck process which is used in diffusion models in machine learning. Both of these were discussed in part V of this series of articles. In the former case, both the drift and diffusion term depend on the dependent variable $x$. In the latter case, only the drift term does. In either case, this dependence means that we cannot directly integrate the equation to solve the SDE as we did in the previous article in this series.

It’s not immediately obvious how to solve these equations, but we can draw inspiration by considering how to solve the related ODE:

\begin{equation}

dx = \mu x\cdot dt.

\tag{2}

\end{equation}

One approach to this ODE is to make the change of variables $u=\log[x]$. Noting that $du = (1/x) dx$, we get the simpler ODE:

\begin{equation}\label{eq:ode6_change_var}

du = \mu\cdot dt,

\tag{3}

\end{equation}

which has solution:

\begin{equation}

u_t = C + \mu t,

\tag{4}

\end{equation}

where $C$ is the integration constant. Substituting in the definition $u=\log[x]$, we get:

\begin{equation}

\log[x] = C + \mu t,

\tag{5}

\end{equation}

which can be re-arranged to give:

\begin{equation}

x = x_0\exp[\mu t],

\tag{6}

\end{equation}

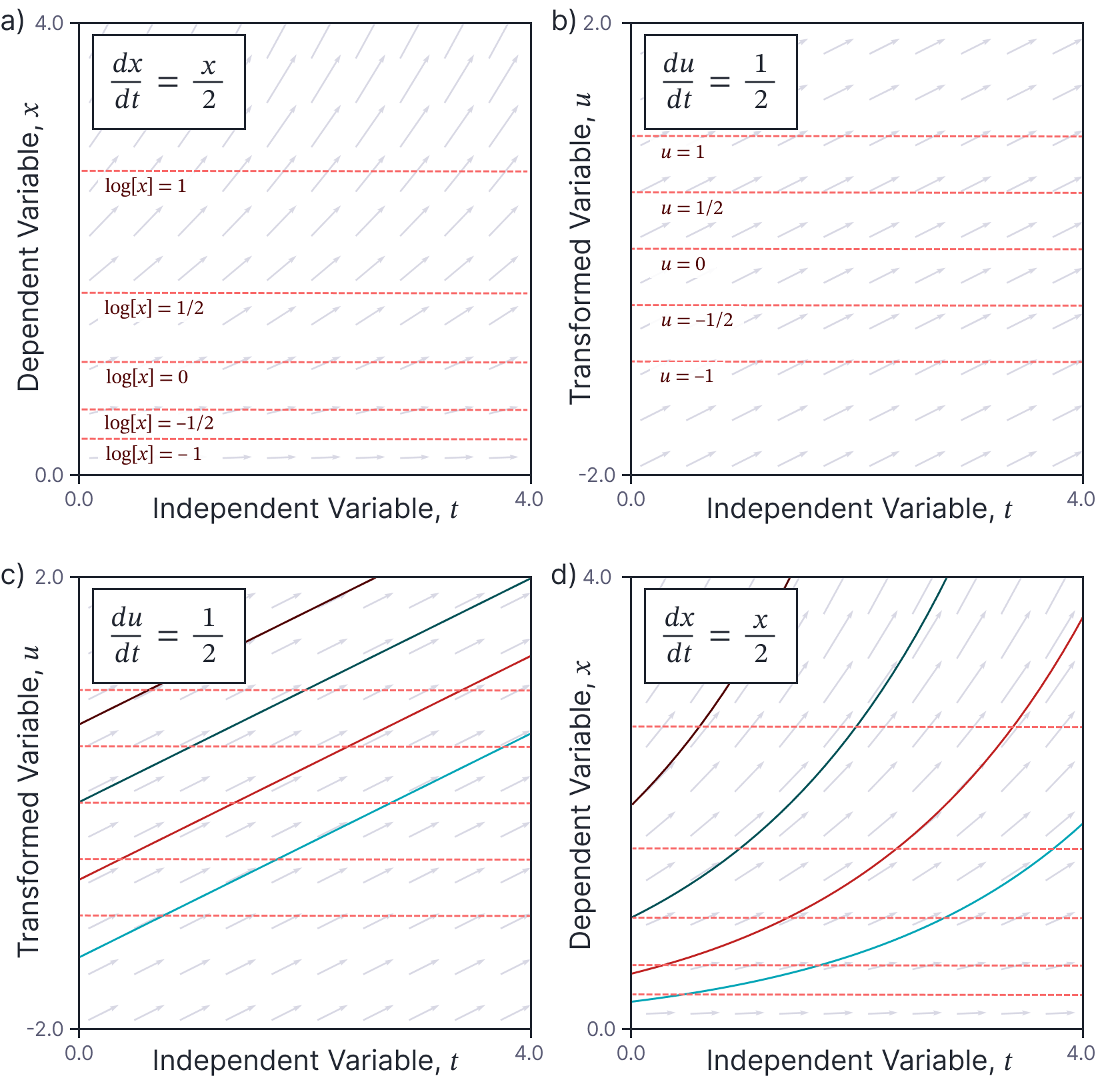

where $x_0=\exp[C]$. This workflow is illustrated in figure 1.

Figure 1. Solving an ODE by changing the variable. a) The right-hand side of this ODE is a function of the dependent variable $x$. b) We can simplify this ODE by making the change of variable $u=\log[x]$. This maps the red lines in panel (a) to the red lines in panel (b). The right-hand side does not depend on the new dependent variable $u$. c) Solving the new ODE is easy (four solutions shown with different initial conditions). d) We then transform the solution back to the original space to solve the original equation. This article will use a similar approach to solve SDEs.
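As a quick numerical check of this workflow, the sketch below integrates the transformed ODE $du=\mu\cdot dt$ with forward Euler steps, maps the result back with $x=\exp[u]$, and compares it with the closed form in equation 6 and with a direct Euler integration of equation 2. The values of `mu`, `x0`, `T`, and `n_steps` are arbitrary illustrative choices and are not taken from the figure.

```python
import numpy as np

mu, x0, T, n_steps = 0.8, 0.5, 2.0, 1000   # illustrative values (assumptions)
dt = T / n_steps

# Direct forward-Euler integration of dx = mu * x * dt (equation 2).
x_euler = x0
for _ in range(n_steps):
    x_euler += mu * x_euler * dt

# Change of variables u = log[x]: du = mu * dt has a constant right-hand side,
# so the Euler steps are exact up to floating-point rounding.
u = np.log(x0)
for _ in range(n_steps):
    u += mu * dt
x_from_u = np.exp(u)

x_exact = x0 * np.exp(mu * T)               # closed form (equation 6)
print("closed form:        ", x_exact)
print("via u = log[x]:     ", x_from_u)
print("direct Euler in x:  ", x_euler)
```

The direct Euler estimate carries a discretization error that shrinks with the step size, whereas the transformed equation has no such error because its right-hand side does not depend on $u$.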

Perhaps we could try a similar approach to the linear homogeneous SDEs in equation 1? In order to apply such an approach, we first need to understand how changes in variables work for stochastic integrals. Such changes are calculated using Itô’s lemma.

Itô’s lemma

In solving the above ODE, we made a change of variable $\mbox{u}[x]$ and then wrote a new ODE that described how $\mbox{u}[x]$ evolved (equation 3). Itô’s lemma generalizes this technique. Given an SDE of the standard form:

\begin{equation}\label{eq:ode6_general_sde3}

dx = \mbox{m}[x,t] dt + \mbox{s}[x,t] dw,

\tag{7}

\end{equation}

Itô’s lemma states that a function $\mbox{u}[x,t]$ of that variable evolves as:

\begin{equation}

du[x,t] = \left(\frac{\partial u}{\partial t} + \frac{\partial u}{\partial x}\mbox{m}[x,t] + \frac{1}{2} \frac{\partial^{2} u}{\partial x^{2}}\mbox{s}[x,t]^{2} \right)dt + \frac{\partial u}{\partial x} \mbox{s}[x,t]dw.

\tag{8}

\end{equation}

In the following sections, we will review the deterministic part of this formula (i.e., if we just had the first term on the right hand side of equation 7) and then examine what happens for a purely stochastic differential equation (i.e., only the second term). Then we’ll combine these results to reproduce the formula above.

Deterministic term

Let’s start by considering how a function $\mbox{u}[x,t]$ evolves when we have a standard ODE consisting of just the first term from equation 7:

\begin{equation}

dx = \mbox{m}[x,t] dt.

\tag{9}

\end{equation}

Dropping the limit notation and the arguments to the function $\mbox{m}[\bullet, \bullet]$ to simplify notation, we can approximate this equation by:

\begin{equation}\label{eq:sde_ito_ode_delta}

\Delta x = \mbox{m}\Delta t.

\tag{10}

\end{equation}

Here, $\Delta x$ is a small change in the dependent variable and $\Delta t$ is a small change in the independent variable.

Now consider a function $\mbox{u}[x,t]$. We can approximate a change in this function with the Taylor expansion:

\begin{equation}

\Delta u \approx \frac{\partial u}{\partial x}\Delta x +\frac{\partial u}{\partial t}\Delta t + \frac{1}{2}\frac{\partial ^{2}u}{\partial x^{2}}\Delta x^2+ \frac{1}{2}\frac{\partial ^{2}u}{\partial t^{2}}\Delta t^2+ \frac{\partial^{2}u}{\partial x\partial t} \Delta x\Delta t\ldots

\tag{11}

\end{equation}

and then substitute in the expression for $\Delta x$ in equation 10 to get:

\begin{equation}

\Delta u \approx \frac{\partial u}{\partial x}m\Delta t+\frac{\partial u}{\partial t}\Delta t + \frac{1}{2}\frac{\partial ^{2}u}{\partial x^{2}}m^{2}\Delta t^2+\frac{1}{2}\frac{\partial ^{2}u}{\partial t^{2}}\Delta t^2+ \frac{\partial^{2}u}{\partial x\partial t} m\Delta t^2\ldots

\tag{12}

\end{equation}

We now note that as $\Delta t\rightarrow 0$, the terms of order $\Delta t^{2}$ and higher become very small relative to those in $\Delta t$ and so we can drop them to give:

\begin{equation}

\Delta u \approx \frac{\partial u}{\partial x} m \Delta t+\frac{\partial u}{\partial t}\Delta t.

\tag{13}

\end{equation}

Reintroducing the limit notation and the arguments, we get

\begin{equation}

d\mbox{u}[x,t] = \left(\frac{\partial u}{\partial t}+\frac{\partial u}{\partial x} \mbox{m}[x,t]\right) dt.

\tag{14}

\end{equation}
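The sketch below checks equation 14 numerically for one hypothetical example: the function $\mbox{u}[x,t]=t x^{2}$ and the drift $\mbox{m}[x,t]=-x$ are arbitrary choices made for illustration, as is the evaluation point. It compares the finite change $\Delta u/\Delta t$ produced by the step in equation 10 with the rate predicted by equation 14, and the discrepancy should shrink roughly in proportion to $\Delta t$.

```python
def u(x, t):
    return t * x**2          # example function u[x,t] (arbitrary choice)

def m(x, t):
    return -x                # example drift m[x,t] (arbitrary choice)

x, t = 1.5, 0.7              # evaluation point (arbitrary choice)

# Right-hand side of equation 14 divided by dt, using the analytic partial
# derivatives du/dt = x^2 and du/dx = 2*t*x of the example function.
predicted = x**2 + 2 * t * x * m(x, t)

errors = []
for dt in (1e-1, 1e-2, 1e-3):
    dx = m(x, t) * dt                          # equation 10
    rate = (u(x + dx, t + dt) - u(x, t)) / dt  # finite-difference change in u
    errors.append(abs(rate - predicted))
    print(dt, rate, predicted)
```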

Stochastic term

Now let’s apply the same approach to a pure SDE consisting of just the second term from equation 7:

\begin{equation}

dx = \mbox{s}[x,t] dw.

\tag{15}

\end{equation}

Once more, we drop the limit notation and the arguments to the function to get:

\begin{eqnarray}

\Delta x &=& \mbox{s}\cdot \Delta w\nonumber \\

&=& \mbox{s} \sqrt{\Delta t}\cdot \epsilon,

\tag{16}

\end{eqnarray}

where $\Delta w$ is a small change in the Wiener process. Between the two lines, we have used the original definition of $\Delta w$ as being composed of a sample $\epsilon$ from the standard normal distribution multiplied by $\sqrt{\Delta t}$. We now apply the same approach as in the previous section, but we’ll see that we get very different results; the second order terms from the Taylor expansion do not all conveniently disappear because $\Delta x$ now contains a factor of $\sqrt{\Delta t}$, and so $\Delta x^{2}$ is of order $\Delta t$ rather than $\Delta t^{2}$.

As for the deterministic case, a small change $\Delta u$ of a function $\mbox{u}[x,t]$ can be approximated by the Taylor expansion:

\begin{equation}

\Delta u \approx \frac{\partial u}{\partial x}\Delta x +\frac{\partial u}{\partial t}\Delta t + \frac{1}{2}\frac{\partial^{2}u}{\partial x^{2}}\Delta x^2+ \frac{1}{2}\frac{\partial^{2}u}{\partial t^{2}}\Delta t^2+ \frac{\partial^{2}u}{\partial x\partial t} \Delta x\Delta t \ldots

\tag{17}

\end{equation}

Now we substitute in the expression for $\Delta x$ to get:

\begin{equation}

\Delta u \approx \frac{\partial u}{\partial x}s\sqrt{\Delta t}\cdot\epsilon + \frac{\partial u}{\partial t}\Delta t+ \frac{1}{2}\frac{\partial^{2}u}{\partial x^{2}}s^{2}\Delta t\cdot \epsilon^{2} + \frac{1}{2}\frac{\partial^{2}u}{\partial t^{2}}\Delta t^2 + \frac{\partial^{2}u}{\partial x\partial t} s\Delta t^{3/2}\cdot \epsilon \ldots

\tag{18}

\end{equation}

Finally, we consider what happens as $\Delta t\rightarrow 0$. The first thing to notice is that the last two terms are of order $\Delta t^{2}$ and $\Delta t^{3/2}$ respectively and so they will become small much faster than the other terms and we can drop them to get:

\begin{equation}

\Delta u \approx \frac{\partial u}{\partial x}s\sqrt{\Delta t}\cdot \epsilon + \frac{\partial u}{\partial t}\Delta t+ \frac{1}{2}\frac{\partial^{2}u}{\partial x^{2}}s^{2}\Delta t\cdot \epsilon^{2} .

\tag{19}

\end{equation}

We’ll now consider what happens to each of the terms on the right hand side in turn as $\Delta t\rightarrow 0$. The factor $\sqrt{\Delta t}\cdot \epsilon$ in the first term is just the definition of $\Delta w$ and so it becomes $dw$. The factor $\Delta t$ in the second term does not contain any stochastic elements and moves to the limit as usual to become $dt$.

The third term $s^{2}\Delta t\cdot \epsilon^{2}$ looks like it is stochastic too. However, we’ll show that as $\Delta t\rightarrow 0$, it becomes deterministic. The first moment of this term is:

\begin{eqnarray}

\mathbb{E}\bigl[s^{2}\Delta t\cdot \epsilon^{2} \bigr] &=& s^{2}\Delta t \cdot \mathbb{E}\bigl[\epsilon^{2}\bigr]\nonumber \\

&=& s^{2}\Delta t \cdot 1 \nonumber \\&=& s^{2}\Delta t,

\tag{20}

\end{eqnarray}

where we have used the fact that the variance of the standard noise term $\epsilon$ is one. The second moment is:

\begin{eqnarray}

\mathbb{E}\bigl[s^{4}\Delta t^2\cdot \epsilon^{4}\bigr] &=& s^{4}\Delta t^{2} \cdot \mathbb{E}\bigl[\epsilon^{4}\bigr]\nonumber \\

&=& s^{4}\Delta t^{2} \cdot 3 \nonumber \\ &=& 3s^{4}\Delta t^{2},

\tag{21}

\end{eqnarray}

where we have used the fact that $\mathbb{E}[\epsilon^{4}]$ is the second moment of a chi-square distribution with one degree of freedom, which has the value 3 (this distribution has mean 1 and variance 2). The variance of the term is the second moment minus the square of the first moment, which is $3s^{4}\Delta t^{2}-s^{4}\Delta t^{2}=2s^{4}\Delta t^{2}$.

Now consider what happens as $\Delta t\rightarrow 0$. The first moment is of order $\Delta t$ and so it remains, but the variance is of order $\Delta t^{2}$ and so it becomes negligible relative to the mean as the time increment becomes smaller. The result is that (somewhat surprisingly) this term becomes deterministic and takes the value $s^{2}dt$ as $\Delta t\rightarrow 0$.
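The following Monte Carlo sketch illustrates this argument. It assumes a constant diffusion value `s` and a unit time horizon `T` (both arbitrary choices for illustration), sums the term $s^{2}\Delta t\cdot\epsilon^{2}$ over all the steps in $[0,T]$ for many independent noise sequences, and reports the mean and standard deviation of that sum as the number of steps grows. The mean should stay close to $s^{2}T$ while the spread shrinks.

```python
import numpy as np

rng = np.random.default_rng(0)
s, T = 1.5, 1.0                  # illustrative constants (assumptions)
n_paths = 2000

def summed_second_order_term(n_steps):
    """Sum of s^2 * dt * eps^2 over [0, T], one value per sample path."""
    dt = T / n_steps
    eps = rng.standard_normal((n_paths, n_steps))
    return np.sum(s**2 * dt * eps**2, axis=1)

for n_steps in (10, 100, 1000):
    total = summed_second_order_term(n_steps)
    print(f"steps={n_steps:5d}  mean={total.mean():.4f}  std={total.std():.4f}")
print("s^2 * T =", s**2 * T)
```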

Having considered what happens to each of the terms in the limit, we can now put this all together to write:

\begin{equation}

du =\frac{\partial u}{\partial x}s dw + \frac{\partial u}{\partial t}dt+ \frac{1}{2}\frac{\partial ^{2}u}{\partial x^{2}}s^{2}dt.

\tag{22}

\end{equation}

Reintroducing the arguments and collecting terms we get:

\begin{equation}

du[x,t] =\left(\frac{\partial u}{\partial t}+ \frac{1}{2}\frac{\partial ^{2}u}{\partial x^{2}}\mbox{s}[x,t]^{2}\right)dt +\frac{\partial u}{\partial x}\mbox{s}[x,t] dw.

\tag{23}

\end{equation}

Combining results

In the last two sections we saw how a function $\mbox{u}[x,t]$ evolved under the ODE $dx=\mbox{m}[x,t]dt$:

\begin{equation}

du[x,t] = \left(\frac{\partial u}{\partial t}+ \frac{\partial u}{\partial x} \mbox{m}[x,t]\right) dt,

\tag{24}

\end{equation}

and how a function $\mbox{u}[x,t]$ evolved under the SDE $dx=\mbox{s}[x,t]dw$:

\begin{equation}

du[x,t] =\left(\frac{\partial u}{\partial t}+ \frac{1}{2}\frac{\partial ^{2}u}{\partial x^{2}}\mbox{s}[x,t]^{2}\right)dt +\frac{\partial u}{\partial x}\mbox{s}[x,t] dw.

\tag{25}

\end{equation}

Itô’s lemma follows by superimposing these two results. If we followed exactly the same process of taking the Taylor expansion and considered each term for the general Itô process of the form $dx=\mbox{m}[x,t]dt+\mbox{s}[x,t]dw$ we would retrieve:

\begin{equation}

du[x,t] = \left(\frac{\partial u}{\partial t} + \frac{\partial u}{\partial x}\mbox{m}[x,t] + \frac{1}{2} \frac{\partial^{2} u}{\partial x^{2}}\mbox{s}[x,t]^{2} \right)dt + \frac{\partial u}{\partial x} \mbox{s}[x,t]dw,

\tag{26}

\end{equation}

as required.
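To make the formula concrete, here is a minimal sketch of equation 26 as a small helper. The function name `ito_coefficients` and its calling convention are hypothetical choices made for this illustration; the caller supplies the three partial derivatives of $\mbox{u}[x,t]$ together with $\mbox{m}[x,t]$ and $\mbox{s}[x,t]$, and gets back the drift and diffusion of the SDE for $u$. The example applies it to $\mbox{u}[x,t]=x^{2}$ for the driftless SDE $dx = s_0\, dw$, where `s0` is an arbitrary constant.

```python
def ito_coefficients(du_dt, du_dx, d2u_dx2, m, s):
    """Return the drift and diffusion of u[x,t] given by Ito's lemma.

    All arguments are callables of (x, t): the three partial derivatives of u
    and the drift m and diffusion s of the SDE dx = m dt + s dw.
    """
    def drift(x, t):
        return (du_dt(x, t) + du_dx(x, t) * m(x, t)
                + 0.5 * d2u_dx2(x, t) * s(x, t) ** 2)

    def diffusion(x, t):
        return du_dx(x, t) * s(x, t)

    return drift, diffusion

# Example: u[x,t] = x^2 applied to the driftless SDE dx = s0 * dw.
s0 = 0.5                                   # illustrative constant (assumption)
drift, diffusion = ito_coefficients(
    du_dt=lambda x, t: 0.0,
    du_dx=lambda x, t: 2.0 * x,
    d2u_dx2=lambda x, t: 2.0,
    m=lambda x, t: 0.0,
    s=lambda x, t: s0,
)
# The drift is s0^2 even though dx has no drift; the diffusion is 2 * x * s0.
print(drift(3.0, 0.0), diffusion(3.0, 0.0))
```

The non-zero drift in this example comes entirely from the second-derivative term, which is the part of the formula that has no counterpart in the ordinary chain rule.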

Geometric Brownian motion

We motivated the development of Itô’s lemma by the need to make a change in variables to solve the following SDE:

\begin{equation}

dx = \mu x dt + \sigma x dw,

\tag{27}

\end{equation}

which is commonly referred to as geometric Brownian motion. We now return to this line of argument. Consider making the change of variables $\mbox{u}[x,t] = \log[x]$. When we apply Itô’s lemma:

\begin{equation}

du = \left(\frac{\partial u}{\partial t} + \frac{\partial u}{\partial x}\mbox{m}[x,t] + \frac{1}{2} \frac{\partial^{2} u}{\partial x^{2}}\mbox{s}[x,t]^{2} \right)dt + \frac{\partial u}{\partial x} \mbox{s}[x,t]dw,

\tag{28}

\end{equation}

we obtain:

\begin{equation}

du = \left(0 + \frac{1}{x}\cdot \mu x + \frac{1}{2} \cdot \left(-\frac{1}{x^{2}}\right)\cdot\sigma^{2}x^{2} \right)dt + \frac{1}{x} \cdot \sigma x dw,

\tag{29}

\end{equation}

which simplifies to:

\begin{equation}

du = \left(\mu-\frac{1}{2}\sigma^{2}\right) dt + \sigma dw.

\tag{30}

\end{equation}

This equation has drift and diffusion terms that are constant in both $x$ and $t$, and so it can be solved by directly integrating the two individual terms (see part VI of this series of articles) to give:

\begin{equation}

u = C + \left(\mu-\frac{1}{2}\sigma^{2}\right)t + \sigma W_{t},

\tag{31}

\end{equation}

where $C$ is an arbitrary integration constant.

Finally, we change variables back from $\textrm{u}[x,t]$ to $x$. If $\textrm{u}[x,t]=\log[x]$ then $x = \exp[\textrm{u}[x,t]]$ so we have:

\begin{equation}

x = x_{0}\exp\left[\left(\mu-\frac{1}{2}\sigma^{2}\right)t + \sigma W_{t}\right],

\tag{32}

\end{equation}

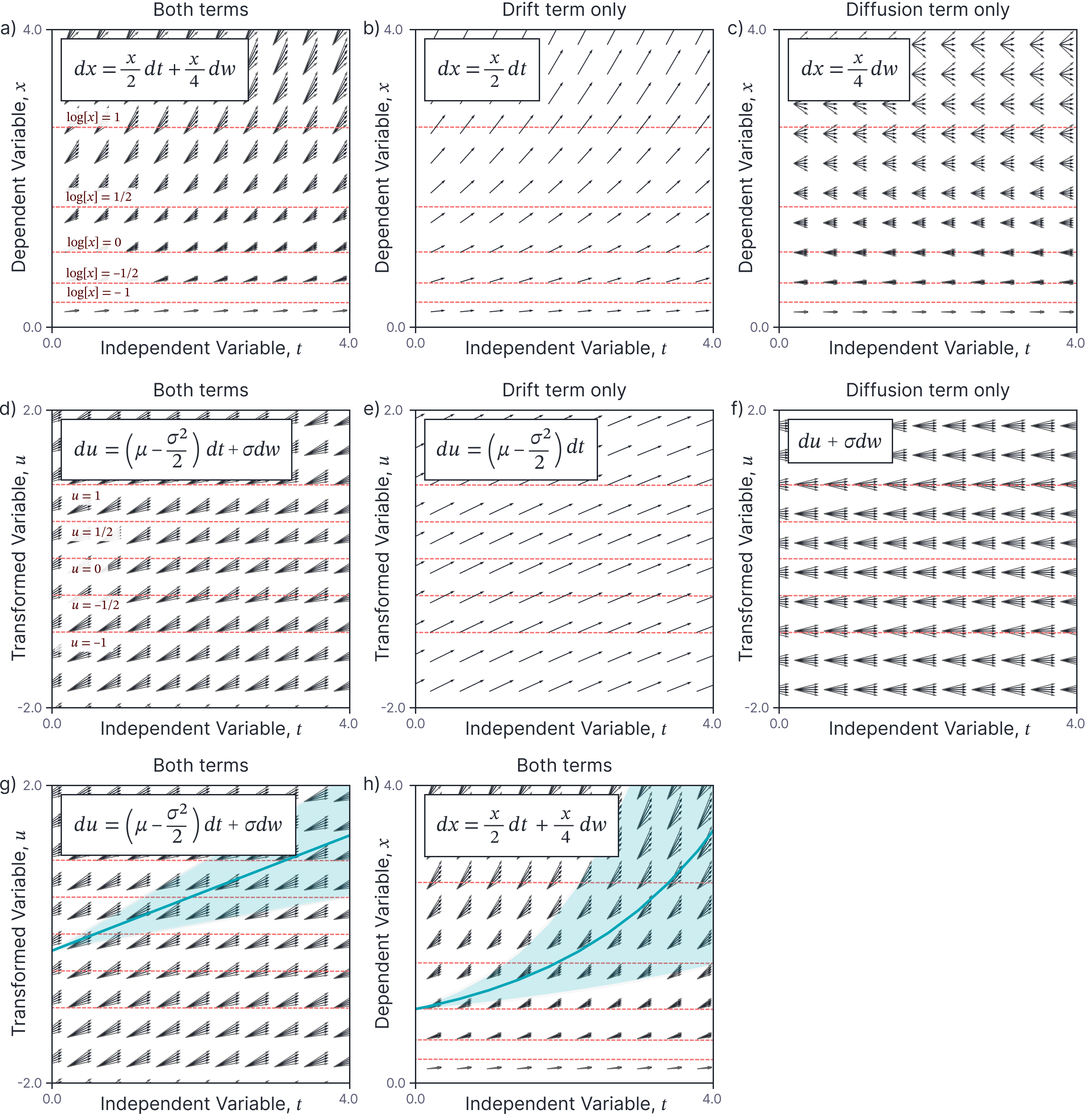

where $x_{0}= \exp[C]$. This process is illustrated in figure 2.

Figure 2. Solving the geometric Brownian motion SDE using a change of variables. a) The full SDE. Decomposing this into b) the drift term and c) the diffusion term, we see that both these quantities depend on $x$. d) We make the change in variable $u=\log[x]$ which maps the red lines in panels a-c) to the red lines in panels d-f). After this change, both e) the drift term and f) the diffusion term are constant in both $x$ and $t$. g) The resulting SDE is easy to solve by direct integration. Blue line is mean of solution for $x_0=-0.5$, shaded area represents $\pm 1$ standard deviation. h) We then transform back to the original variable to solve the original SDE.
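The closed form in equation 32 can be checked against a direct simulation. The sketch below uses an Euler-Maruyama discretization of equation 27 (a standard numerical scheme that is not discussed in this article) and evaluates the closed form on the same Wiener increments, so the two can be compared path by path. It also evaluates a naive formula that omits the $-\sigma^{2}/2$ correction. All parameter values are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)
mu, sigma, x0 = 0.5, 0.3, 1.0          # illustrative parameters (assumptions)
T, n_steps, n_paths = 1.0, 1000, 2000
dt = T / n_steps

# Wiener increments dw = sqrt(dt) * eps, shared by all the solutions below.
dw = np.sqrt(dt) * rng.standard_normal((n_paths, n_steps))

# Euler-Maruyama discretization of dx = mu*x*dt + sigma*x*dw (equation 27).
x_em = np.full(n_paths, x0)
for k in range(n_steps):
    x_em = x_em + mu * x_em * dt + sigma * x_em * dw[:, k]

# Closed form (equation 32) on the same Brownian paths, with W_T = sum of dw.
W_T = dw.sum(axis=1)
x_exact = x0 * np.exp((mu - 0.5 * sigma**2) * T + sigma * W_T)

# Naive formula without the -sigma^2/2 term from Ito's lemma.
x_naive = x0 * np.exp(mu * T + sigma * W_T)

print("mean |EM - closed form|:", np.mean(np.abs(x_em - x_exact)))
print("mean |EM - naive|:      ", np.mean(np.abs(x_em - x_naive)))
print("sample mean:", x_exact.mean(), " x0*exp(mu*T):", x0 * np.exp(mu * T))
```

The simulated paths should track the closed form closely and the naive formula noticeably less well, and the sample mean should be close to $x_0\exp[\mu T]$, which is the expected value of geometric Brownian motion.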

Ornstein-Uhlenbeck process

Now we apply the same technique to the Ornstein-Uhlenbeck process, which has the general form:

\begin{equation}

dx = -\gamma x dt + \sigma dw.

\tag{33}

\end{equation}

Consider making the change of variables $\mbox{u}[x,t] = x \exp[\gamma t]$. When we apply Itô’s lemma:

\begin{equation}

du = \left(\frac{\partial u}{\partial t} + \frac{\partial u}{\partial x}\mbox{m}[x,t] + \frac{1}{2} \frac{\partial^{2} u}{\partial x^{2}}\mbox{s}[x,t]^{2} \right)dt + \frac{\partial u}{\partial x} \mbox{s}[x,t]dw,

\tag{34}

\end{equation}

we obtain:

\begin{eqnarray}

du &=& \Bigl(\gamma x \exp[\gamma t] + \exp[\gamma t]\cdot (-\gamma x) + \frac{1}{2} \cdot 0 \cdot \sigma^2\Bigr)dt + \exp[\gamma t] \sigma dw\nonumber \\

&=& \exp[\gamma t] \cdot \sigma dw.

\tag{35}

\end{eqnarray}

The drift term of this equation is zero and the diffusion term depends only on $t$. We can integrate to give:

\begin{equation}

u[x,t] = x_0 + \int_0^t \exp[\gamma \tau] \sigma dw_\tau.

\tag{36}

\end{equation}

Now we substitute back in $\mbox{u}[x,t] = x \exp[\gamma t]$ and solve for $x$ to give:

\begin{equation}

x = x_0 \exp[-\gamma t]+ \exp[-\gamma t]\cdot \int_0^t \exp\bigl[\gamma \tau\bigr] \sigma dw_\tau.

\tag{37}

\end{equation}

The first term on the right hand side is deterministic. The second term is a stochastic integral. In part VI of this series of articles, we saw that the mean and variance of an integral

\begin{equation}

\int_0^t \textrm{s}\bigl[\tau\bigr] dw_\tau,

\tag{38}

\end{equation}

are given by:

\begin{eqnarray}

\mathbb{E} \left[\int_0^t \textrm{s}[\tau] dw_\tau \right] &=& 0\nonumber \\

\textrm{Var} \left[ \int_0^t \textrm{s}[\tau]dw_{\tau}\right] &=& \int_0^t \textrm{s}^2[\tau] d\tau,

\tag{39}

\end{eqnarray}

respectively. Applying these results, we can see that:

\begin{eqnarray}

\mathbb{E}[x_t] &=& x_0\exp[-\gamma t]\nonumber \\

\mbox{Var}[x_t] & =& \exp[-2\gamma t]\cdot \int_0^t \bigl(\exp[2\gamma \tau] \sigma^2\bigr) d\tau\nonumber \\

&=& \exp[-2\gamma t]\cdot \left[\frac{\sigma^2}{2\gamma}\exp[2\gamma \tau]\right]_0^t\nonumber\\

&=& \frac{\sigma^2}{2\gamma}\Bigl(1-\exp[-2\gamma t]\Bigr),

\tag{40}

\end{eqnarray}

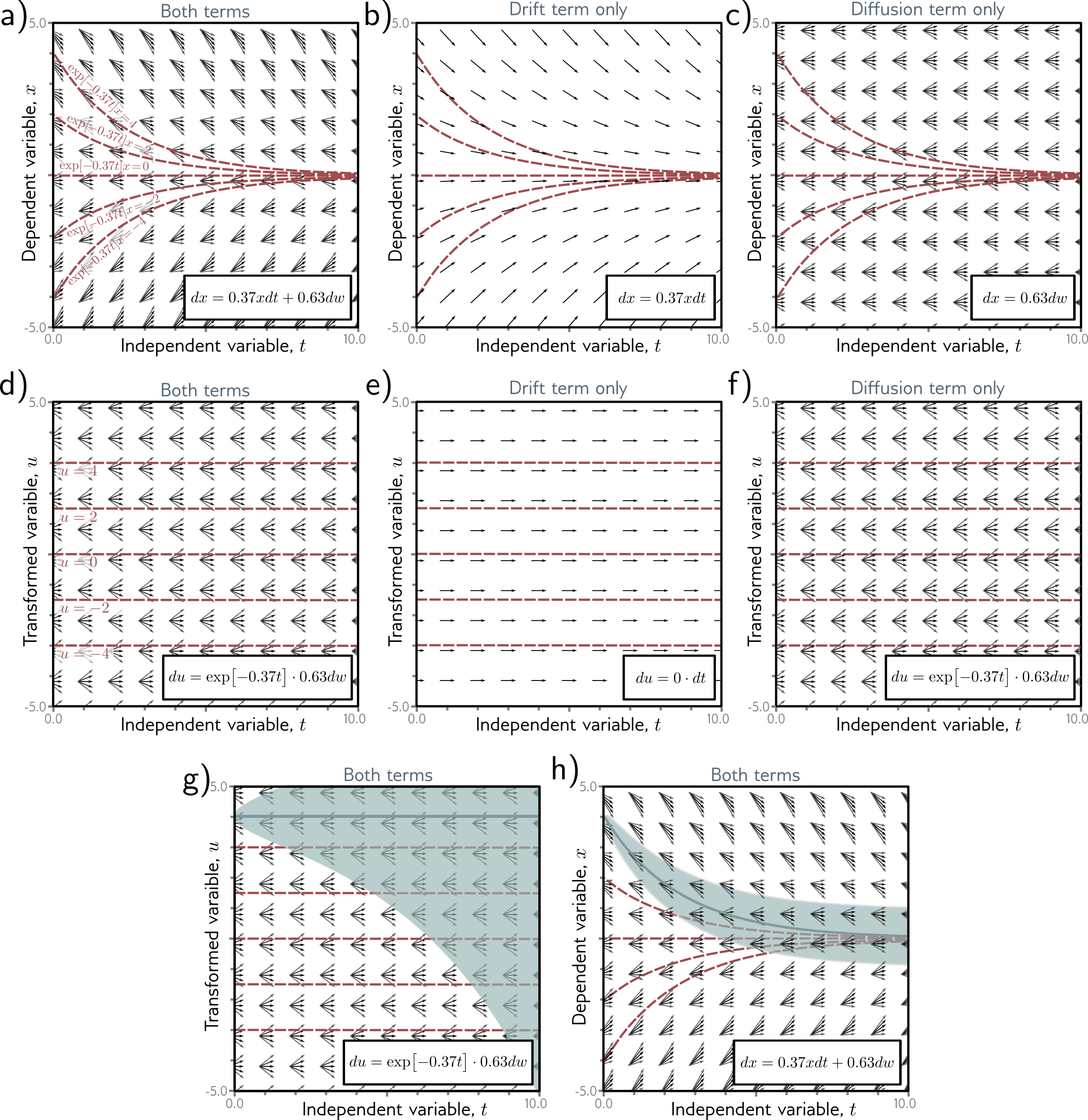

where the mean term comes from the deterministic drift term, and the variance comes from the second stochastic term. We have used the relation $\mbox{Var}[\alpha\cdot x] = \alpha^2\cdot \mbox{Var}[x]$ to account for the effect of the multiplicative factor $\exp[-\gamma t]$. This solution process is illustrated in figure 3.

Figure 3. Solving the Ornstein-Uhlenbeck SDE using a change of variables. a) The full SDE. Decomposing this into b) the drift term and c) the diffusion term, we see that the drift term depends on $x$. d) We make the change in variable $u=x\exp[\gamma t]$ which maps the red lines in panels a-c) to the red lines in panels d-f). After this change, e) the drift term disappears and f) the diffusion term depends only on $t$. g) The resulting SDE is easy to solve by direct integration. Blue line is mean of solution for $x_0=-0.5$, shaded area represents $\pm 1$ standard deviation. h) We then transform back to the original variable to solve the original SDE.
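The mean and variance in equation 40 can be checked by simulation. The sketch below uses an Euler-Maruyama discretization of equation 33 (a standard numerical scheme that is not discussed in this article) and compares the sample moments at time `T` with the closed-form values. The parameter values are illustrative assumptions and are not necessarily those used to draw the figure.

```python
import numpy as np

rng = np.random.default_rng(2)
gamma, sigma, x0 = 0.5, 1.0, -0.5      # illustrative parameters (assumptions)
T, n_steps, n_paths = 4.0, 2000, 5000
dt = T / n_steps

# Euler-Maruyama discretization of dx = -gamma*x*dt + sigma*dw (equation 33).
x = np.full(n_paths, x0)
for _ in range(n_steps):
    dw = np.sqrt(dt) * rng.standard_normal(n_paths)
    x = x - gamma * x * dt + sigma * dw

# Closed-form moments from equation 40.
mean_theory = x0 * np.exp(-gamma * T)
var_theory = sigma**2 / (2 * gamma) * (1 - np.exp(-2 * gamma * T))

print("mean:    ", x.mean(), " theory:", mean_theory)
print("variance:", x.var(), " theory:", var_theory)
```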

Since we saw that Wiener integrals are normally distributed, we can equivalently write the solution to the Ornstein-Uhlenbeck equation at any fixed time $t$ (in the sense that both expressions have the same distribution) as:

\begin{equation}

x_{t} = x_0\exp[-\gamma t] + \frac{\sigma}{\sqrt{2\gamma}} W_{1-\exp[-2\gamma t]}.

\tag{41}

\end{equation}

Regardless of how we express the solution, it has a simple interpretation; for $\gamma>0$, the mean decays exponentially from the initial value $x_0$ to zero as $t\rightarrow \infty$, and the variance starts at zero and approaches $\sigma^2/(2\gamma)$ as $t\rightarrow \infty$.
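Because $W_{s}$ is normally distributed with mean zero and variance $s$, equation 41 also gives a way to draw samples of $x_t$ at a single time without simulating the whole path. The sketch below does this; the function name `sample_ou` and the parameter values are hypothetical choices for illustration. For large $t$ the sample variance should settle near $\sigma^{2}/(2\gamma)$.

```python
import numpy as np

def sample_ou(x0, gamma, sigma, t, n_samples, rng):
    """Draw samples of x_t for the Ornstein-Uhlenbeck process via equation 41."""
    s = 1.0 - np.exp(-2.0 * gamma * t)                  # index of the Wiener process
    w_s = np.sqrt(s) * rng.standard_normal(n_samples)   # W_s ~ Normal(0, s)
    return x0 * np.exp(-gamma * t) + sigma / np.sqrt(2.0 * gamma) * w_s

rng = np.random.default_rng(3)
gamma, sigma, x0 = 0.5, 1.0, -0.5      # illustrative parameters (assumptions)
for t in (0.1, 1.0, 10.0):
    x_t = sample_ou(x0, gamma, sigma, t, 100_000, rng)
    print(f"t={t:5.1f}  mean={x_t.mean():+.4f}  variance={x_t.var():.4f}")
print("limiting variance sigma^2/(2*gamma):", sigma**2 / (2 * gamma))
```

Note that this reproduces the distribution of $x_t$ at one chosen time only; samples drawn at different times this way are independent of each other and so do not form a path.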

Conclusion

In this blog, we derived Itô’s lemma, which governs changes of variables in stochastic differential equations. We then used the lemma to help find closed-form expressions for solutions to the geometric Brownian motion SDE, and the Ornstein-Uhlenbeck process.

In the next part of this series (part VIII), we conclude our study of SDEs by introducing the Fokker-Planck equation, which takes a stochastic differential equation and maps it to the partial differential equation governing the evolving probability density of the solution. We also consider Anderson’s theorem which allows us to reverse the direction of SDEs. Both of these results will become important when we discuss diffusion models.