This blog post is based on our paper accepted to IJCAI’s workshop: AI4TS: AI for time series analysis. Please refer to the paper What Constitutes Good Contrastive Learning in Time-Series Forecasting? for full details.

Investigating contrastive learning for time-series.

Self-supervised contrastive learning (SSCL) has shown outstanding performance in various domains, including computer vision (CV) [1], [2], [3] and natural language processing (NLP) [4], [5]. The technique utilizes unlabeled data to create positive (similar) and negative (non-similar) samples for learning representations. Recently, SSCL methods like TS2Vec [6] and CoST [7] have achieved success in time series data analysis.

In the realm of time series forecasting, Transformer-based models [8], [9], [10], [11] have consistently outperformed Temporal Convolutional Networks (TCNs) as backbones in end-to-end setups. However, it is intriguing that mainstream SSCL approaches for time-series forecasting still predominantly rely on TCNs. This raises an essential question: can Transformers be effectively used for contrastive learning in time-series forecasting?

Moreover, the typical SSCL approach for time series involves a two-step process: first, learning a representation using contrastive objectives on an in-domain dataset, and then training a regressor on the obtained representation using labeled data for the downstream task. It remains unclear if alternative end-to-end methods hold untapped potential. To gain a comprehensive understanding of the best strategies for utilizing SSCL in time-series forecasting and how they enhance performance, further research is crucial.

In our paper, we conduct a thorough investigation into the effectiveness of SSCL for time-series forecasting. Our exploration includes various SSCL algorithms designed specifically for time series, diverse learning strategies, and backbone architectures. We seek to address the following key questions:

- Which backbone model is most suitable for leveraging SSCL in time-series forecasting models?

- What constitutes the optimal learning strategy for effectively employing SSCL in time-series models?

- In what ways does the integration of SSCL enhance the performance of time-series forecasting models?

What constitutes good contrastive learning for time series forecasting?

Unlike pre-training in CV and NLP, pre-training for time series usually uses the same dataset as the downstream task, but without exploiting labels. Given a time-series dataset $\mathcal{D}=\{x_1, x_2, \dots, x_N\}$ with $N$ instances, we train an encoder $\Phi(\cdot)$ to map each $x_i$ to a representation $r_i$ that captures information useful for time-series forecasting, where $x_i \in \mathbb{R}^{t \times m}$, $r_i \in \mathbb{R}^{t \times d}$, $t$ is the number of timestamps, $m$ is the dimension of the input signals, and $d$ is the hidden size of the representations.

Which backbone model should be preferred?

We investigate the following three backbone architectures: (1) Long short-term memory network (LSTM) [12] is a variant of the RNN that processes the input sequence recursively and regulates information flow with “gates” (e.g., forget and cell gates). (2) Temporal Convolutional Network (TCN) [13] is a convolutional neural network for processing sequential data that combines causal convolutions with dilated convolutions. Dilated convolutions enable TCNs to capture long-range dependencies in the input data while keeping the number of parameters relatively small. (3) Transformer [14] is a fully attention-based architecture. A Transformer consists of an input embedding layer and one or more Transformer layers. Each Transformer layer has a multi-head self-attention sublayer and a feed-forward sublayer. Self-attention enables Transformers to capture both local and global dependencies in sequential data. In our experiments, we use the Informer [15] architecture, which replaces vanilla multi-head attention with a sparse variant called ProbSparse self-attention to improve the model’s efficiency.
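To make the TCN description concrete, here is a minimal, dependency-free sketch of a causal dilated convolution. It is not the implementation used in the paper; the function names, the kernel of ones, and the dilation schedule (1, 2, 4) are assumptions chosen for illustration. It shows that each output depends only on current and past inputs, and that stacking dilated layers grows the receptive field quickly with few parameters.

```python
def causal_dilated_conv(x, weights, dilation):
    """1-D causal convolution: out[t] sees only x[t], x[t - d], x[t - 2d], ..."""
    out = []
    for t in range(len(x)):
        total = 0.0
        for j, w in enumerate(weights):
            idx = t - j * dilation
            if idx >= 0:  # indices before the sequence start act as zero padding
                total += w * x[idx]
        out.append(total)
    return out


def receptive_field(kernel_size, dilations):
    """Timestamps visible to one output after stacking one layer per dilation."""
    return 1 + (kernel_size - 1) * sum(dilations)


# Push a unit impulse through three stacked layers (illustrative settings).
signal = [0.0] * 16
signal[3] = 1.0
hidden = signal
for d in (1, 2, 4):
    hidden = causal_dilated_conv(hidden, [1.0, 1.0], d)

responding = [t for t, v in enumerate(hidden) if v != 0.0]
first_response, last_response = responding[0], responding[-1]
```

Because the convolution is causal, no output before timestamp 3 responds to the impulse, and the span of responding outputs matches `receptive_field(2, [1, 2, 4])`.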

Which learning strategy is the best?

End-to-End Training. To train a model for time series forecasting, an MLP is added on top of the encoder $\Phi(\cdot)$, which takes the last $T$ hidden representations ${\{r_{N-T}, r_{N-T+1}, \dots, r_{N}}\}$ from $\Phi(\cdot)$ as inputs to predict the future $T$ observations ${\{x_{N+1}, x_{N+2}, \dots , x_{N+T}}\}$. The MLP head and encoder are trained on a supervised dataset with MSE loss (Eq. 1). We incorporate SSCL into an end-to-end training setup by using SSCL as an auxiliary objective. SSCL uses all representations ${\{r_{1}, r_{2}, \dots, r_{N}}\}$ to calculate the corresponding contrastive loss. We introduce a scale $\lambda$ to balance the MSE loss and contrastive loss.

\begin{equation}
\mathcal{L}_{i}^{MSE} = (x_i - \hat{x}_i)^2 \tag{1}
\end{equation}
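The joint objective used in end-to-end training can be sketched as follows. This is a hedged illustration rather than the paper's code: it assumes that $\lambda$ multiplies the contrastive term and that the per-step squared errors of Eq. 1 are averaged over the prediction window. The names `mse_loss`, `joint_loss`, and `lam`, as well as the numbers, are hypothetical.

```python
def mse_loss(targets, predictions):
    """Mean of the per-step squared errors from Eq. 1."""
    return sum((x - x_hat) ** 2 for x, x_hat in zip(targets, predictions)) / len(targets)


def joint_loss(targets, predictions, contrastive_loss, lam):
    """Supervised MSE plus a contrastive auxiliary term scaled by lam (assumed form)."""
    return mse_loss(targets, predictions) + lam * contrastive_loss


# Illustrative values only; in practice the contrastive loss comes from the SSCL head.
example = joint_loss([1.0, 2.0, 3.0], [1.5, 2.0, 2.5], contrastive_loss=2.0, lam=0.1)
```

Setting `lam` to zero recovers plain supervised training, which makes it easy to compare the two end-to-end variants with a single training loop.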

Two-Step Training. Previous work first pre-trains an encoder $\Phi(\cdot)$ with SSCL and then uses the pre-trained encoder as a feature extractor (i.e., keeping $\Phi(\cdot)$ frozen) to map input time series into latent representations. Unlike pre-training in NLP and CV, a time-series encoder is usually pre-trained on the same dataset as the downstream task because of the severe domain shift in time-series data. We evaluate the following settings. (1) Regressor. TS2Vec and CoST use the learned representations to train an external linear regression model with an L2-norm penalty to predict the future observations $\hat{x} = \{\hat{x}_{N+1}, \hat{x}_{N+2}, \dots , \hat{x}_{N+T}\}$. (2) MLP. We train an MLP with MSE loss to predict $\hat{x}$ while keeping $\Phi(\cdot)$ frozen. (3) Fine-tuning. While the pre-training and fine-tuning framework has achieved impressive improvements on NLP and CV tasks, existing studies have not investigated its utility in time-series forecasting. Thus, we add a new MLP head on top of the pre-trained encoder $\Phi(\cdot)$ and fine-tune both end-to-end with MSE loss.
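For setting (1), a linear regressor with an L2 penalty has the standard closed-form ridge solution. In the sketch below, $R$ (the frozen representations stacked row-wise), $Y$ (the corresponding future observations), $W$ (the regression weights), and the penalty weight $\alpha$ are notation introduced here for illustration and do not appear in the paper.

\begin{equation}
W^{*} = \arg\min_{W} \|RW - Y\|_F^2 + \alpha \|W\|_F^2 = (R^\top R + \alpha I)^{-1} R^\top Y
\end{equation}

Since the encoder is frozen, $R$ is computed once, and only $W$ depends on the labels.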

Which loss function is the best?

We investigate two main SSCL algorithms and their variants. All the methods considered use the InfoNCE contrastive loss [16] but construct negative samples differently. Eq. 2 shows the InfoNCE loss, where $sim(\cdot)$ is the cosine similarity $\frac{h_i^\top h_j}{||h_i||\cdot||h_j||}$; $h_i$, $h_{p(i)}$, and $h_a$ are the representations of an anchor, a positive sample, and a negative sample, respectively; $\tau$ is a temperature hyperparameter; and $Neg$ is the set of negative samples. We consider the following loss formulations. (1) Hierarchical Contrastive Loss (HCL) [6] recursively aggregates timestamp representations using max pooling with a kernel size of 2 and applies the contrastive loss along the time axis at each level. At the end of the aggregation, when each time series is represented by a single vector, the model also learns instance-level representations. (2) Momentum Contrast (MoCo1) [1] uses a momentum encoder to produce positive samples and a dynamic memory bank to provide a large number of negative samples. MoCo provides an instance-level contrastive loss, for which a randomly selected timestamp represents the whole sequence. (3) MoCo2 [17] adds three components to MoCo1 to enhance SSCL: (i) replacing the feed-forward projection layer with a two-layer MLP with ReLU, (ii) more data augmentation techniques, and (iii) a cosine learning rate scheduler. (4) HCL+MoCo2. We combine the losses from HCL and MoCo2 with equal weight.
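Two mechanisms from the list above, the recursive max pooling in HCL and the momentum encoder with a memory bank in MoCo, can be sketched in a few lines. This is an illustrative toy under stated assumptions, not the TS2Vec or MoCo implementation: parameters are flat lists of floats, the function names are hypothetical, and the momentum value and queue size are arbitrary.

```python
from collections import deque


def max_pool_time(reps, kernel=2):
    """Shrink the time axis with an element-wise max over adjacent timestamps."""
    pooled = []
    for start in range(0, len(reps) - kernel + 1, kernel):
        window = reps[start:start + kernel]
        pooled.append([max(values) for values in zip(*window)])
    return pooled


def hierarchy(reps):
    """All levels produced by pooling until one vector represents the series."""
    levels = [reps]
    while len(levels[-1]) > 1:
        levels.append(max_pool_time(levels[-1]))
    return levels


def momentum_update(key_params, query_params, m=0.999):
    """MoCo-style update: the key encoder slowly tracks the query encoder."""
    return [m * k + (1.0 - m) * q for k, q in zip(key_params, query_params)]


# Four timestamps with two hidden dimensions each (made-up values).
levels = hierarchy([[1.0, 5.0], [2.0, 3.0], [0.0, 7.0], [4.0, 4.0]])

# A fixed-size FIFO memory bank: the oldest negative key is dropped automatically.
memory_bank = deque(maxlen=4)
for key in ([0.1], [0.2], [0.3], [0.4], [0.5]):
    memory_bank.append(key)
```

In HCL a contrastive loss would be computed at every entry of `levels`; the sketch only shows how the levels are formed.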

\begin{equation}
\mathcal{L}_{i}^{SSCL} = - \log \frac{e^{sim(h_i, h_{p(i)})/\tau}}{e^{sim(h_i, h_{p(i)})/\tau} +\sum_{a\in Neg} e^{sim(h_i, h_a)/ \tau}}\tag{2}
\end{equation}
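As a worked illustration of Eq. 2, the following dependency-free sketch computes the InfoNCE loss for a single anchor. The function names and the default temperature are assumptions made for this example; a real training loop would compute the same quantity in batches with an autodiff framework.

```python
import math


def cosine_sim(u, v):
    """Cosine similarity between two vectors given as lists of floats."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def info_nce(anchor, positive, negatives, tau=0.1):
    """InfoNCE loss of Eq. 2 for one anchor, one positive, and a set of negatives."""
    pos_logit = cosine_sim(anchor, positive) / tau
    logits = [pos_logit] + [cosine_sim(anchor, n) / tau for n in negatives]
    # Log-sum-exp with the maximum subtracted for numerical stability.
    max_logit = max(logits)
    log_denominator = max_logit + math.log(sum(math.exp(l - max_logit) for l in logits))
    return log_denominator - pos_logit


aligned = info_nce([1.0, 0.0], [1.0, 0.0], [[0.0, 1.0]], tau=1.0)
misaligned = info_nce([1.0, 0.0], [0.0, 1.0], [[1.0, 0.0]], tau=1.0)
```

The loss is small when the anchor is close to its positive and far from the negatives (`aligned`), and larger when the roles are swapped (`misaligned`).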

Experiments

Datasets

We conduct experiments on three real-world public datasets: the electricity transformer temperature (ETT) dataset [15], which includes both an hourly-level dataset (ETTh) and a 15-minute-level dataset (ETTm), and the electricity dataset (ECL), which contains the electricity consumption of 321 clients.

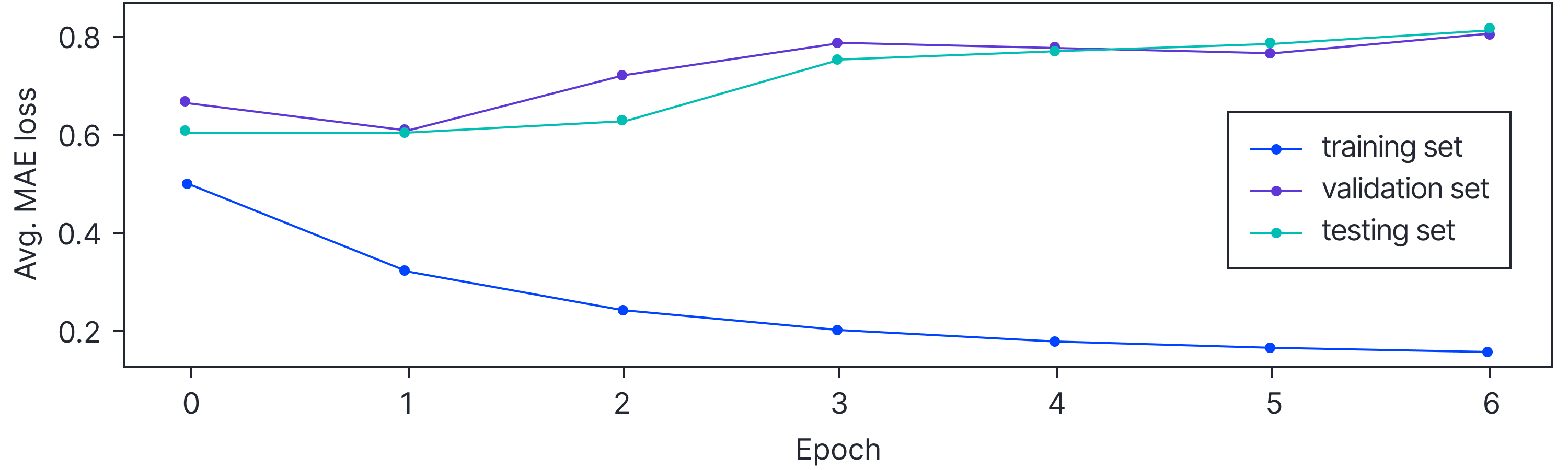

Figure 1. Informer with early stopping.

Figure 2. Training curves of Informer and TCN backbones on the ETTh1 dataset (prediction horizon $24$).

The ETT datasets consist of data from six power load features and measurements of oil temperature, while the ECL dataset was converted into hourly-level measurements following previous work [6].

We conduct our experiments for multivariate forecasting and use MSE as the main evaluation metric while also reporting the MAE results. To ensure an overall evaluation, we average the MSE across all prediction lengths and the three datasets to represent the overall performance of each model.
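The evaluation protocol can be sketched as below. This is a hedged illustration: it assumes the overall score is a flat mean over every (dataset, prediction length) pair, and the dataset keys and scores are placeholders, not results from the paper.

```python
def mse(y_true, y_pred):
    """Mean squared error over a prediction window."""
    return sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / len(y_true)


def mae(y_true, y_pred):
    """Mean absolute error over a prediction window."""
    return sum(abs(y - p) for y, p in zip(y_true, y_pred)) / len(y_true)


def overall_score(per_dataset):
    """Flat mean of the scores over all (dataset, prediction length) pairs."""
    scores = [s for horizons in per_dataset.values() for s in horizons.values()]
    return sum(scores) / len(scores)


# Placeholder MSE values keyed by dataset and prediction length (not real results).
placeholder = {
    "A": {24: 0.5, 48: 0.7},
    "B": {24: 0.3, 48: 0.5},
    "C": {24: 0.2, 48: 0.2},
}
overall = overall_score(placeholder)
```

If the datasets had different numbers of prediction lengths, a mean of per-dataset means would give a different number, so it is worth stating which convention a reported average uses.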

Table 1. Multivariate time series forecasting results of LSTM-based models.

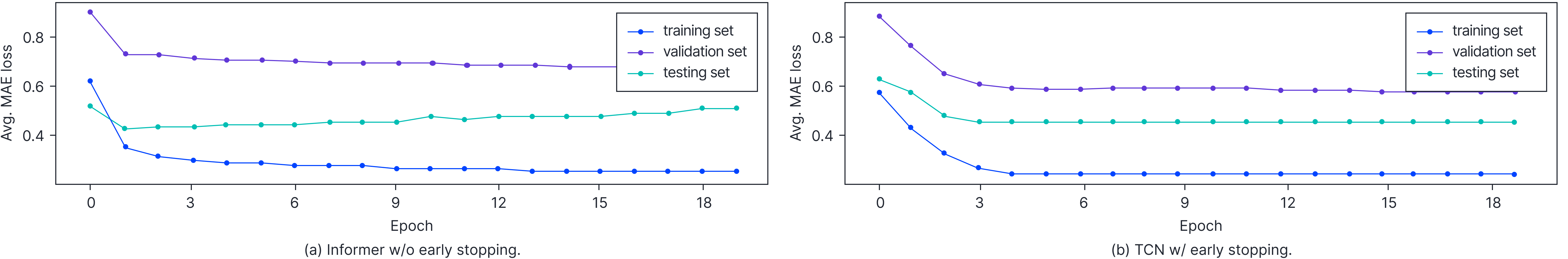

Table 2. Multivariate time series forecasting results of TCN-based models.

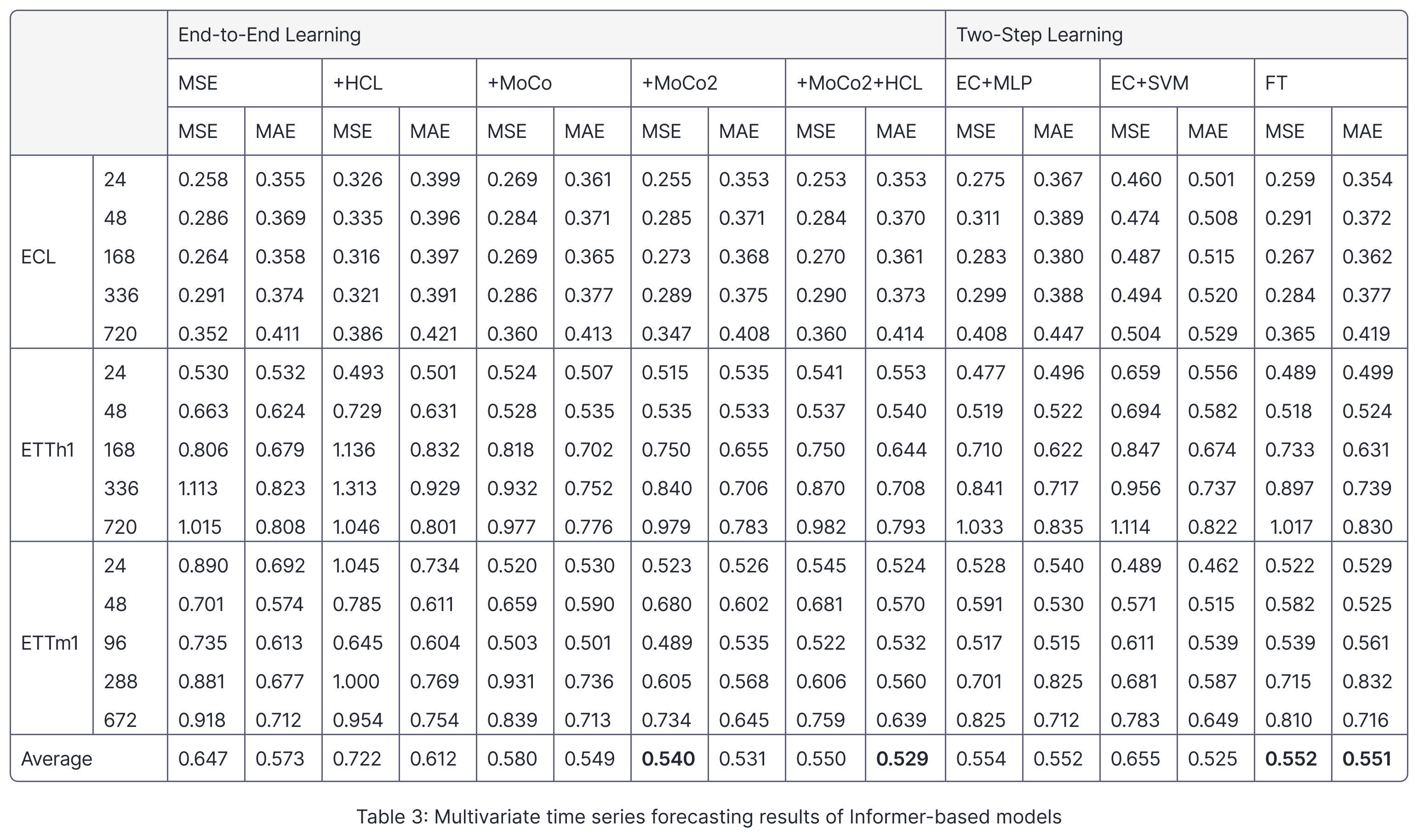

Table 3. Multivariate time series forecasting results of Informer-based models.

Quantitative Analysis

In this section, we aim to answer the questions raised in Investigating contrastive learning for time-series. Our objective is to carry out a well-controlled study for each question.

Which backbone model works best for SSCL? To determine the most effective backbone architecture for exploiting SSCL in time series forecasting, we conduct experiments with three different encoder architectures: LSTM, TCN, and Informer. For each architecture, we train the model end-to-end with both MSE and MoCo2 losses, using SSCL as an auxiliary objective. Our results indicate that the Transformer-based model outperforms the LSTM- and TCN-based architectures, achieving an MSE of $0.540$. This is $0.260$ and $0.024$ lower than the MSE of the LSTM-based and TCN-based models, respectively.

We observe that the performance of the Transformer backbone is more sensitive to the optimization hyperparameters used during training. The early stopping strategy, which starts with a relatively large initial learning rate, does not yield satisfactory results for the Informer backbone, as shown in Figure 2. In such cases, the training process may not converge effectively. However, it is worth noting that this strategy tends to be beneficial for other backbones. Properly training the network and realizing its full capacity heavily relies on addressing this sensitivity to the learning rate.

Which learning strategy or SSCL algorithm works best for SSCL? To determine the most effective learning strategy for SSCL, we compare different approaches using Informer-based models within the MoCo2 framework. We test the end-to-end learning strategy and a two-step learning strategy. Our results, shown in Table 3, indicate that end-to-end learning with SSCL as an auxiliary objective produces better performance, with an average MSE of $0.540$. This model also outperforms one trained solely with MSE loss. In end-to-end learning, we find that MoCo2 is the most effective SSCL algorithm. Among the three approaches within the two-step learning strategy, fine-tuning produces the best results (MSE=$0.552$), outperforming the two frozen-encoder approaches.

How do backbones affect learning objectives or learning strategies? We now evaluate the effectiveness of SSCL in improving the performance of time series forecasting models with different backbone architectures. First, we compare different end-to-end training approaches, which train with either MSE loss alone or joint losses (i.e., MSE and SSCL losses). Our results show that the LSTM-based model obtains only a marginal improvement with MoCo2 and MoCo2+HCL. In contrast, the TCN-based models yield substantial MSE reductions when integrated with MoCo2 and MoCo2+HCL: $0.099$ and $0.097$, respectively. Among the various backbone models, the most effective approach is to train the Informer model with MSE and MoCo2 jointly. The Informer backbone achieves the best MSE of $0.540$ ($0.024$ lower than TCN) and the best MAE of $0.529$ ($0.011$ lower than TCN). These findings highlight the importance of considering the specific backbone architecture when implementing SSCL. While some models benefit from SSCL, others might not show any noticeable improvement in performance.

Next, we compare various two-step learning approaches using different backbone architectures. Comparing the results in Tables 1, 2, and 3, we can see that the Transformer encoder outperforms the other two backbone models when the models are trained with the pre-training and fine-tuning framework. The LSTM-, TCN-, and Transformer-based models attain test MSE values of $0.853$, $0.590$, and $0.552$, respectively. Across all backbones, the fine-tuning approach is more effective than using a pre-trained model as a frozen encoder. Also, the LSTM-based model performs poorly when used as a frozen feature encoder after pre-training (MSE$=1.070$). The linear regressor consistently yields inferior results compared to the MLP prediction head.

Furthermore, our evaluation of different learning strategies reveals that the best-performing end-to-end learning model consistently outperforms the best two-step learning models, irrespective of the backbone used. We validate that adopting end-to-end learning with SSCL as an auxiliary objective on the Transformer model is the preferred approach. This method not only delivers superior performance but also proves more efficient and straightforward to implement.

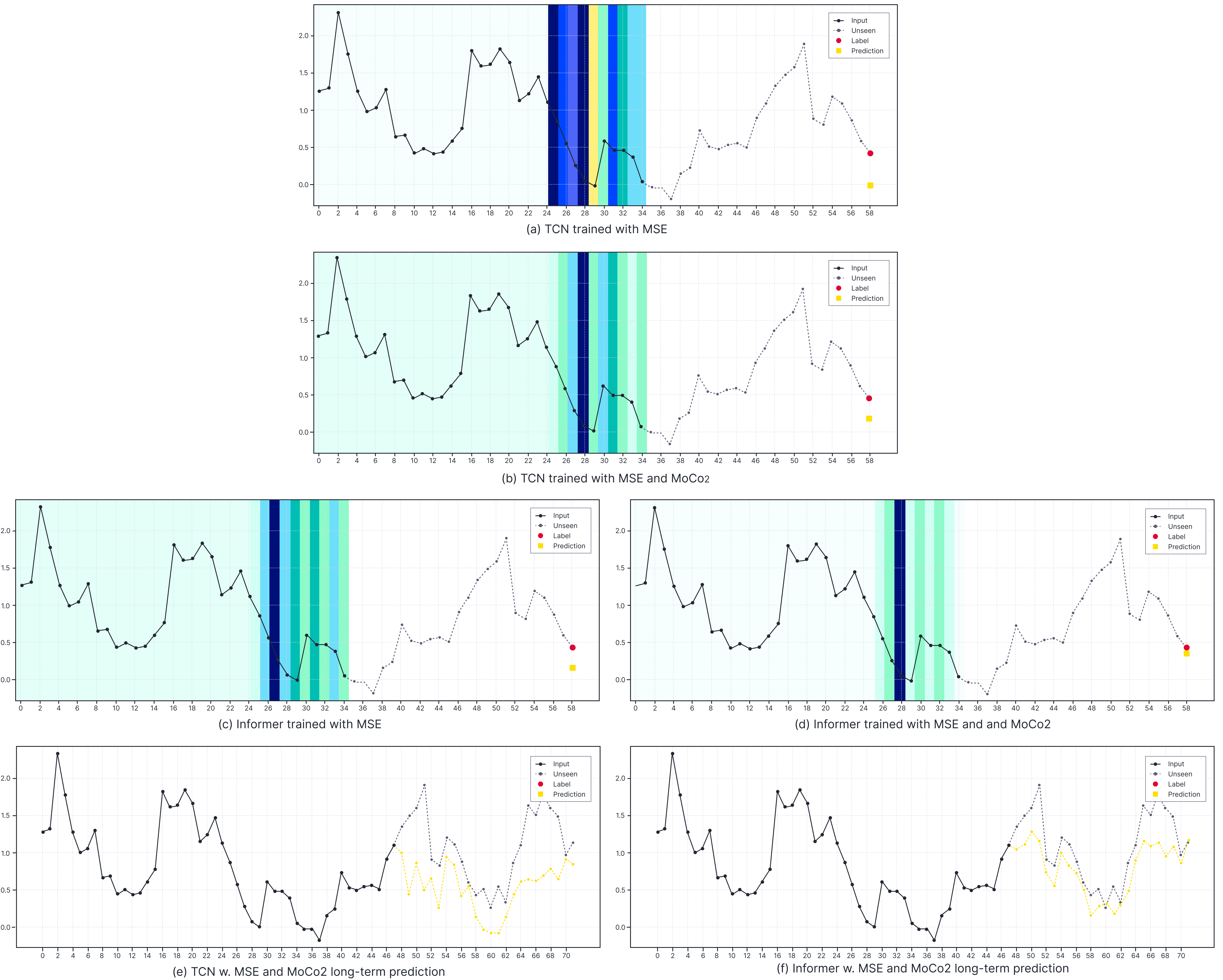

Figure 3. Comparison of the effective receptive field and long-term prediction of TCNs and Informers on ETTh1 dataset with a prediction length of $24$. The denser colour indicates a larger gradient to the input timestamp.

Qualitative Analysis

To gain deeper insights into how SSCL enhances time-series forecasting, we conducted a qualitative analysis of the empirical receptive field (RF) of a TCN, following the approach proposed by [18]. To carry out this analysis, we pass a validation set sample $x_i$ through the trained model and calculate the MSE loss between the model prediction $\hat{y}_i^j$ and the corresponding gold label $y_i^j$ at timestamp $j$, using Eq. 1. We then calculate the gradient of the loss with respect to the input sequence $x_i$ by back-propagation, which is formulated as:

\begin{equation}
\frac{\partial \mathcal{L}_i^j}{\partial x_i} = \frac{\partial(y_i^j-\hat{y}_i^j)^2}{\partial x_i}
\label{eq:gradient}
\end{equation}
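The gradient above can be illustrated without a deep learning framework. The sketch below swaps back-propagation for central finite differences, which approximate the same derivative on small inputs; `toy_forecaster` is a hypothetical stand-in for a trained model, and its weights and the input values are made up for the example.

```python
def toy_forecaster(x):
    """Hypothetical stand-in for a trained model: uses only x[-1] and x[-4]."""
    return 0.7 * x[-1] + 0.3 * x[-4]


def input_gradient(model, x, target, eps=1e-5):
    """Approximate d(loss)/d(x[t]) for every timestamp t with central differences."""
    def loss(seq):
        return (target - model(seq)) ** 2

    grads = []
    for t in range(len(x)):
        up = list(x)
        down = list(x)
        up[t] += eps
        down[t] -= eps
        grads.append((loss(up) - loss(down)) / (2 * eps))
    return grads


x = [0.2, 0.4, 0.1, 0.9, 0.3, 0.5, 0.8, 0.6]
saliency = [abs(g) for g in input_gradient(toy_forecaster, x, target=1.0)]
influential = [t for t, s in enumerate(saliency) if s > 1e-6]
```

Plotting `saliency` over the input timestamps gives a one-row version of a receptive-field heat map: larger values mark the inputs the prediction is most sensitive to, here the two timestamps the toy model actually reads.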

As illustrated in Figure 3, SSCL allows both the TCN and Transformer models to concentrate more on information relevant to forecasting, such as scale or periodic relationships, than the vanilla models trained exclusively with MSE loss. Figure 3b and Figure 3d show that the models trained with SSCL focus strongly on timestamps whose periodic pattern is similar to that of the target timestamp. Furthermore, the TCN and Transformer models trained with SSCL effectively capture historical information associated with the scale or periodic pattern of the target timestamp. These findings underscore the effectiveness of SSCL in improving the performance of time-series forecasting models. Note that we only visualize the effective receptive field for a single time step prediction, with the loss computed as in Eq. 1, to avoid cluttered plots. We also compare long-term predictions in Figure 3e and Figure 3f.

Key takeaways

In this blog post, we examined how different training factors affect the learning process for time series forecasting with SSCL.

Here is a summary of our key findings:

- Transformer-based models outperform TCN and LSTM-based models when leveraging SSCL for learning time series representations. Among the evaluated SSCL algorithms, MoCo2 proves to be the most effective in terms of performance.

- Integrating SSCL as an auxiliary objective within an end-to-end learning framework yields the best results for time series forecasting tasks.

- Importantly, our experiments demonstrate that training a Transformer model end-to-end with MSE loss, along with SSCL, leads to significant improvement.

- Additionally, our qualitative analyses highlight the ability of SSCL to capture historical information related to the target timestamp’s scale or periodic patterns.

We believe that these insights provide valuable guidance for utilizing SSCL in time series forecasting and will inspire future research in this field. For additional details, please refer to the full paper. We are pleased to announce that the paper has been accepted to the 2023 IJCAI workshop on time-series modeling.

References

1 – [He et al., 2020] He, K., Fan, H., Wu, Y., Xie, S., and Girshick, R. B. (2020). Momentum contrast for unsupervised visual representation learning. In 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition, CVPR 2020, Seattle, WA, USA, June 13-19, 2020, pages 9726-9735. Computer Vision Foundation / IEEE.

2 – [Chen et al., 2020a] Chen, T., Kornblith, S., Norouzi, M., and Hinton, G. (2020a). A simple framework for contrastive learning of visual representations. In International conference on machine learning, pages 1597-1607. PMLR.

3 – [Kotar et al., 2021] Kotar, K., Ilharco, G., Schmidt, L., Ehsani, K., and Mottaghi, R. (2021). Contrasting contrastive self-supervised representation learning pipelines. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 9949-9959.

4 – [Gao et al., 2021] Gao, T., Yao, X., and Chen, D. (2021). SimCSE: Simple contrastive learning of sentence embeddings. In Moens, M., Huang, X., Specia, L., and Yih, S. W., editors, Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, EMNLP 2021, Virtual Event / Punta Cana, Dominican Republic, 7-11 November, 2021, pages 6894-6910. Association for Computational Linguistics.

5 – [Liu et al., 2021] Liu, F., Vulic, I., Korhonen, A., and Collier, N. (2021). Fast, effective, and self-supervised: Transforming masked language models into universal lexical and sentence encoders. In Moens, M., Huang, X., Specia, L., and Yih, S. W., editors, Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, EMNLP 2021, Virtual Event / Punta Cana, Dominican Republic, 7-11 November, 2021, pages 1442-1459. Association for Computational Linguistics.

6 – [Yue et al., 2022] Yue, Z., Wang, Y., Duan, J., Yang, T., Huang, C., Tong, Y., and Xu, B. (2022). TS2Vec: Towards universal representation of time series. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 36, pages 8980-8987.

7 – [Woo et al., 2022] Woo, G., Liu, C., Sahoo, D., Kumar, A., and Hoi, S. C. H. (2022). CoST: Contrastive learning of disentangled seasonal-trend representations for time series forecasting. In The Tenth International Conference on Learning Representations, ICLR 2022, Virtual Event, April 25-29, 2022. OpenReview.net.

8 – [Shabani et al., 2022] Shabani, A., Abdi, A., Meng, L., and Sylvain, T. (2022). Scaleformer: iterative multiscale refining transformers for time series forecasting. arXiv preprint arXiv:2206.04038.

9 – [Zhou et al., 2021a] Zhou, H., Zhang, S., Peng, J., Zhang, S., Li, J., Xiong, H., and Zhang, W. (2021). Informer: Beyond efficient transformer for long sequence time-series forecasting. In Proceedings of the AAAI conference on artificial intelligence, volume 35, pages 11106-11115.

10 – [Wu et al., 2021] Wu, H., Xu, J., Wang, J., and Long, M. (2021). Autoformer: Decomposition transformers with auto-correlation for long-term series forecasting. Advances in Neural Information Processing Systems, 34:22419-22430.

11 – [Zhou et al., 2022] Zhou, T., Ma, Z., Wen, Q., Wang, X., Sun, L., and Jin, R. (2022). Fedformer: Frequency enhanced decomposed transformer for long-term series forecasting. In International Conference on Machine Learning, pages 27268-27286. PMLR.

12 – [Hochreiter and Schmidhuber, 1997] Hochreiter, S. and Schmidhuber, J. (1997). Long short-term memory. Neural computation, 9(8):1735-1780.

13 – [Bai et al., 2018] Bai, S., Kolter, J. Z., and Koltun, V. (2018). An empirical evaluation of generic convolutional and recurrent networks for sequence modeling. CoRR, abs/1803.01271.

14 – [Vaswani et al., 2017] Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A. N., Kaiser, L., and Polosukhin, I. (2017). Attention is all you need. In Guyon, I., von Luxburg, U., Bengio, S., Wallach, H. M., Fergus, R., Vishwanathan, S. V. N., and Garnett, R., editors, Advances in Neural Information Processing Systems 30: Annual Conference on Neural Information Processing Systems 2017, December 4-9, 2017, Long Beach, CA, USA, pages 5998-6008.

15 – [Zhou et al., 2021b] Zhou, H., Zhang, S., Peng, J., Zhang, S., Li, J., Xiong, H., and Zhang, W. (2021b). Informer: Beyond efficient transformer for long sequence time-series forecasting. In Thirty-Fifth AAAI Conference on Artificial Intelligence, AAAI 2021, Thirty-Third Conference on Innovative Applications of Artificial Intelligence, IAAI 2021, The Eleventh Symposium on Educational Advances in Artificial Intelligence, EAAI 2021, Virtual Event, February 2-9, 2021, pages 11106-11115. AAAI Press.

16 – [Chen et al., 2017] Chen, T., Sun, Y., Shi, Y., and Hong, L. (2017). On sampling strategies for neural network-based collaborative filtering. In Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, Halifax, NS, Canada, August 13 – 17, 2017, pages 767-776. ACM.

17 – [Chen et al., 2020b] Chen, X., Fan, H., Girshick, R. B., and He, K. (2020b). Improved baselines with momentum contrastive learning. CoRR, abs/2003.04297.

18 – [Luo et al., 2016] Luo, W., Li, Y., Urtasun, R., and Zemel, R. S. (2016). Understanding the effective receptive field in deep convolutional neural networks. In Lee, D. D., Sugiyama, M., von Luxburg, U., Guyon, I., and Garnett, R., editors, Advances in Neural Information Processing Systems 29: Annual Conference on Neural Information Processing Systems 2016, December 5-10, 2016, Barcelona, Spain, pages 4898-4906.